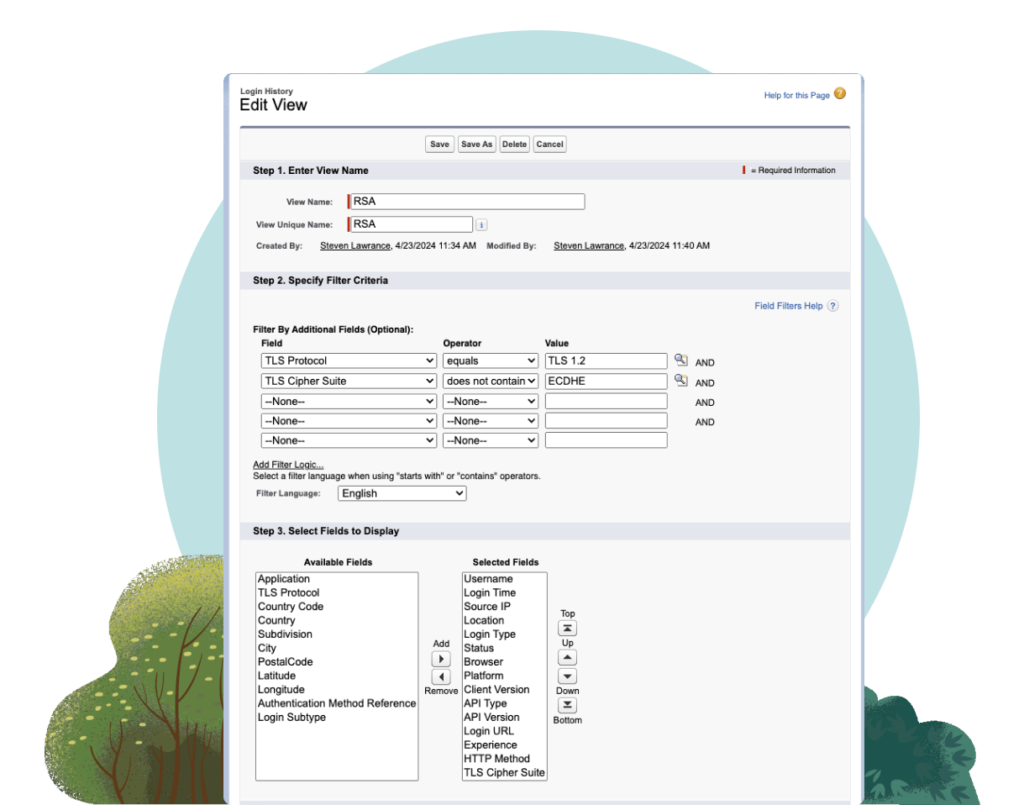

Salesforce to Replace RSA Key Exchanges with TLS 1.3

IMPORTANT: Starting May 1, 2025, Salesforce will phase out RSA key exchange for TLS connections as part of its effort to strengthen Transport Layer Security (TLS) for customers. From that date, Salesforce will no longer accept RSA key exchange on any incoming TLS connection. TLS 1.3 will become the preferred protocol for Salesforce, but TLS 1.2 will […]
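For client integrations, a practical readiness check is to make sure your TLS client never offers RSA key-exchange cipher suites. TLS 1.3 always uses ephemeral (EC)DHE key agreement, so the concern applies only to TLS 1.2 suites such as `AES256-GCM-SHA384`. The sketch below assumes a Python client built on the standard `ssl` module (backed by OpenSSL). The cipher string and the helper names `make_forward_secret_context`, `rsa_key_exchange_suites`, and `probe` are illustrative choices, not Salesforce's published configuration.

```python
from __future__ import annotations

import socket
import ssl


def make_forward_secret_context() -> ssl.SSLContext:
    """Hypothetical helper: a client context that avoids RSA key exchange."""
    ctx = ssl.create_default_context()
    # Require TLS 1.2 or newer; TLS 1.3 is negotiated when both sides support it.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # Illustrative cipher string: restrict TLS 1.2 suites to ECDHE key exchange.
    # TLS 1.3 suites are configured separately by OpenSSL and are unaffected.
    ctx.set_ciphers("ECDHE+AESGCM:ECDHE+CHACHA20")
    return ctx


def rsa_key_exchange_suites(ctx: ssl.SSLContext) -> list[str]:
    """List enabled suites whose OpenSSL description reports Kx=RSA."""
    return [
        c["name"]
        for c in ctx.get_ciphers()
        if "Kx=RSA" in c["description"].split()
    ]


def probe(host: str, port: int = 443) -> tuple[str, str]:
    """Connect once and report the negotiated protocol version and cipher."""
    ctx = make_forward_secret_context()
    with socket.create_connection((host, port), timeout=10) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            return tls.version(), tls.cipher()[0]


if __name__ == "__main__":
    ctx = make_forward_secret_context()
    print("RSA key-exchange suites enabled:", rsa_key_exchange_suites(ctx))
```

Calling `probe("your-instance.my.salesforce.com")` (a placeholder hostname) against your own org would show whether the connection negotiates TLS 1.3 or an ECDHE suite over TLS 1.2. The cipher string is intentionally narrow; adjust it to match your platform's security policy.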
