What Google I/O ’26 means for developing agents on Google Cloud

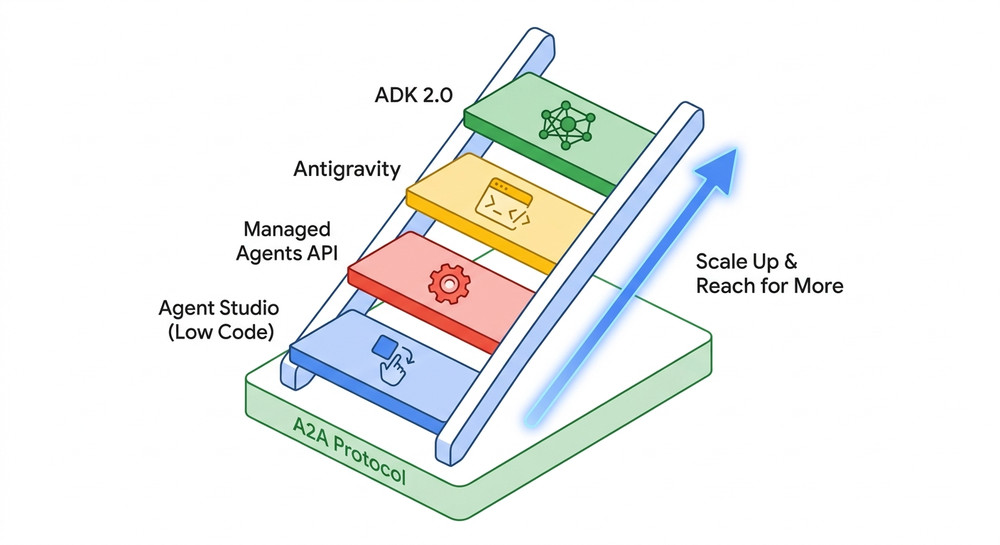

At Google I/O, we introduced a unified development toolkit featuring Antigravity 2.0 and the Managed Agents API, giving developers better ways to build locally and deploy securely to the cloud on a shared protocol layer. In this post, we show how Gemini Enterprise Agent Platform and the new developer tools shared at […]
