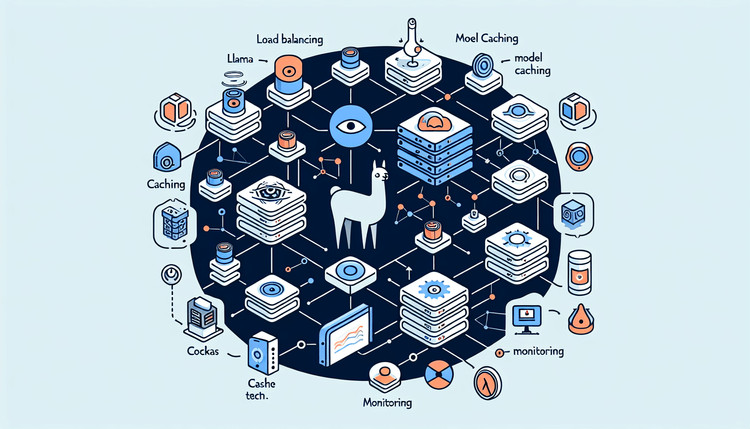

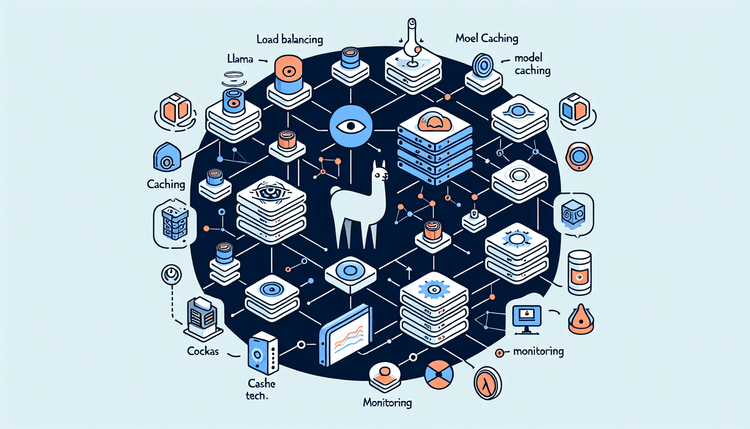

Running Llama 3 locally is easy. Running it reliably in production with load balancing, model caching, and monitoring? That requires architecture.

Continue reading From Demo to Production: Self-Hosting LLMs with Ollama and Docker on SitePoint.