Reinforcement fine-tuning on Amazon Bedrock with OpenAI-Compatible APIs: a technical walkthrough

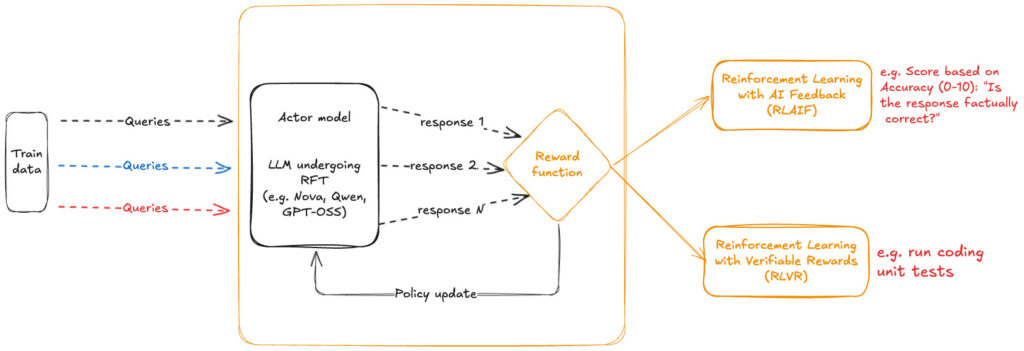

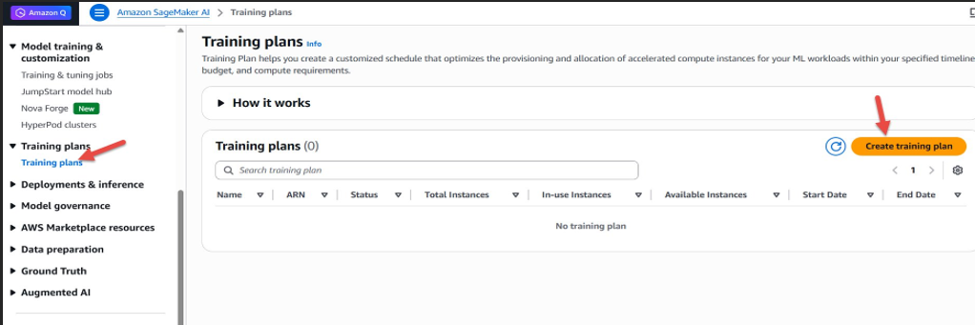

In December 2025, we announced the availability of reinforcement fine-tuning (RFT) on Amazon Bedrock, starting with support for Nova models. In February 2026, we extended support to open-weight models such as OpenAI GPT OSS 20B and Qwen 3 32B. RFT in Amazon Bedrock automates the end-to-end customization workflow. This allows the […]