Over the last 18 months, “AI agents” and “retrieval-augmented generation (RAG)” have gone from niche concepts to ubiquitous yet profoundly misunderstood buzzwords. While they’re mentioned often in strategy decks, the number of organizations successfully shipping robust, production-grade implementations remains vanishingly small.

Since 2024, I’ve been architecting and tinkering with systems that integrate agentic logic with advanced RAG pipelines in production environments — subject to the unforgiving constraints of real-time user traffic, stringent latency SLOs, and non-negotiable cost ceilings.

The stark reality is that the prevailing narrative, often centered on connecting a large language model (LLM) to a vector database via a simple API call, dangerously oversimplifies the challenge. The majority of so-called “AI engineering” has yet to graduate beyond prototypes that are little more than a ReAct loop over a vanilla ChromaDB instance. The true engineering discipline required to build, deploy, and scale these systems remains uncharted territory for most.

For organizations committed to becoming genuinely AI-native, not merely AI-curious, the following technical roadmap is critical.

1. Beyond vibes: Why your AI needs a real engineering foundation

A groundbreaking research paper on multi-hop reasoning is irrelevant when your API gateway returns 503 Service Unavailable under concurrent load. Agentic and RAG systems are distributed software systems first and AI models second. Mastery of modern software engineering is a non-negotiable prerequisite. This means proficiency in high-performance asynchronous frameworks and patterns (e.g., FastAPI and event-driven services built on asyncio), containerization and orchestration (Docker, Kubernetes), and automated CI/CD pipelines that handle testing, canary deployments, and rollbacks. You cannot build a reliable, fault-tolerant agent without a deep understanding of how to ship resilient, observable, and scalable microservices.
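To make the asynchronous point concrete, here is a minimal sketch using only Python's standard `asyncio`. It assumes a hypothetical `call_downstream` coroutine standing in for an LLM, vector database, or tool API. It caps in-flight calls with a semaphore and applies a per-call timeout, so a burst of concurrent requests queues and degrades predictably instead of surfacing as a 503. A production service would typically sit behind a framework such as FastAPI; the limits shown are placeholders, not recommendations.

```python
import asyncio
import random


async def call_downstream(request_id: int) -> str:
    """Hypothetical downstream call (LLM, vector DB, or tool API)."""
    await asyncio.sleep(random.uniform(0.01, 0.05))  # simulated network latency
    return f"result-{request_id}"


async def guarded_call(sem: asyncio.Semaphore, request_id: int, timeout_s: float) -> str:
    # The semaphore caps concurrent downstream calls, so a traffic spike
    # queues inside this process instead of overwhelming the dependency.
    async with sem:
        try:
            # The timeout bounds the downstream call itself, not the queue wait.
            return await asyncio.wait_for(call_downstream(request_id), timeout=timeout_s)
        except asyncio.TimeoutError:
            # Return a predictable degraded result rather than hanging the caller.
            return f"timeout-{request_id}"


async def handle_batch(request_ids, max_in_flight: int = 10, timeout_s: float = 1.0) -> list:
    sem = asyncio.Semaphore(max_in_flight)
    # gather preserves input order in its result list.
    return await asyncio.gather(*(guarded_call(sem, rid, timeout_s) for rid in request_ids))


if __name__ == "__main__":
    results = asyncio.run(handle_batch(range(25), max_in_flight=5))
    print(results[:3])  # ['result-0', 'result-1', 'result-2']
```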

How it works in Agentforce: Agentforce abstracts away this entire layer of infrastructural complexity. It runs on Salesforce’s global, enterprise-grade Hyperforce infrastructure, meaning the challenges of container orchestration, autoscaling, and network reliability are managed for you. Instead of spending months on DevOps, your team can focus immediately on defining agent logic within a pre-built, resilient, and observable environment that is designed for production scale from day one.

2. Agents aren’t chatbots: Architecting for planning, memory, and failure

A production-ready agent is not a chatbot with a conversational memory buffer. It is a complex system requiring sophisticated architectural patterns for planning, memory, and tool interaction.

- Planning & orchestration: Simple ReAct (Reason+Act) loops are brittle. Production systems require more robust planners, often implemented as state machines or Directed Acyclic Graphs (DAGs), to manage complex task decomposition. This involves techniques like LLM-as-a-judge for path selection and dynamic plan correction.

- Memory hierarchy: Memory must be architected in tiers: a short-term context window for immediate conversation, a mid-term buffer (e.g., a Redis cache for user session data), and a long-term associative memory, typically a vector store, for retrieving past interactions or global knowledge.

- Tool use and fault tolerance: Tool interaction cannot be fire-and-forget. It demands robust API schema validation, automatic retries with exponential backoff, circuit breakers to prevent cascading failures (e.g., when a downstream billing API is down), and well-defined fallback logic. The primary engineering challenge is not making an agent sound intelligent, but ensuring it fails gracefully and predictably.
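The retry, circuit-breaker, and fallback behavior described above can be sketched in plain Python. This is a minimal, single-threaded illustration rather than any library's API: `CircuitBreaker`, `call_with_retries`, and `fetch_with_fallback` are hypothetical names, the thresholds are placeholders, and a real deployment would also need thread safety and breaker state shared across replicas. Schema validation is omitted for brevity.

```python
import random
import time


class CircuitOpenError(RuntimeError):
    """Raised while the breaker is open, so callers fail fast."""


class CircuitBreaker:
    """Minimal single-threaded circuit breaker (closed -> open -> half-open)."""

    def __init__(self, failure_threshold: int = 3, reset_timeout_s: float = 30.0):
        self.failure_threshold = failure_threshold
        self.reset_timeout_s = reset_timeout_s
        self.failures = 0
        self.opened_at = None  # monotonic time when the breaker last tripped

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if time.monotonic() - self.opened_at < self.reset_timeout_s:
                raise CircuitOpenError("downstream marked unhealthy")
            self.opened_at = None  # half-open: let one trial call through
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            # After a trip, failures stays >= threshold, so a failed trial re-opens.
            if self.failures >= self.failure_threshold:
                self.opened_at = time.monotonic()
            raise
        self.failures = 0  # any success closes the breaker fully
        return result


def call_with_retries(fn, *, max_attempts=4, base_delay_s=0.1, max_delay_s=2.0,
                      retryable=(ConnectionError, TimeoutError)):
    """Retry transient errors with capped exponential backoff and full jitter."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except retryable:
            if attempt == max_attempts - 1:
                raise
            delay = min(max_delay_s, base_delay_s * 2 ** attempt)
            time.sleep(random.uniform(0, delay))
    raise ValueError("max_attempts must be >= 1")


def fetch_with_fallback(breaker, fetch_fn, fallback="Billing is temporarily unavailable."):
    """Hypothetical wrapper: retry through the breaker, then degrade gracefully."""
    try:
        return call_with_retries(lambda: breaker.call(fetch_fn))
    except (CircuitOpenError, ConnectionError, TimeoutError):
        return fallback
```

`CircuitOpenError` is deliberately left out of the retryable exceptions: once the breaker trips, callers fall through to the fallback immediately instead of continuing to hammer a downstream billing API that is already failing.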

How it works in Agentforce: Agentforce provides a declarative framework for agent creation, replacing brittle, hand-coded logic with robust, pre-built patterns. You can visually design complex task flows, while the platform manages the underlying state. Memory hierarchies are a native feature, seamlessly connecting short-term context to long-term knowledge in Data Cloud. Furthermore, the tool integration framework comes with built-in fault tolerance, automatically handling retries, timeouts, and circuit breakers, ensuring your agent is resilient by default.

3. RAG’s silent failures: Hybrid search, reranking, and rigorous evaluation

The quality of a RAG system is almost entirely determined by the relevance and precision of its retrieved context. Most RAG failures are silent retrieval failures masked by a plausible-sounding LLM hallucination.

- The indexing pipeline: Effective retrieval begins with a sophisticated data ingestion and chunking pipeline. Fixed-size chunking is insufficient. Advanced strategies involve semantic chunking, recursive chunking based on document structure (headings, tables), and custom parsing for heterogeneous data types like PDFs and HTML.

- Hybrid retrieval: Relying solely on dense vector search is a critical mistake. State-of-the-art retrieval combines dense search (using strong embedding models such as e5-large-v2, ideally fine-tuned on domain data) with sparse search, either keyword-based (BM25) or learned sparse representations (SPLADE). This hybrid approach captures both semantic similarity and lexical relevance.

- Reranking and evaluation: The top-k results from the initial retrieval must be reranked using a more powerful, but slower, model like a cross-encoder (bge-reranker-large). Furthermore, retrieval quality must be systematically evaluated using metrics like Precision@k, Mean Reciprocal Rank (MRR), and Normalized Discounted Cumulative Gain (nDCG). Without a rigorous evaluation framework, your RAG system is operating blindly.
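The fusion and evaluation steps can be sketched compactly in standard-library Python. The sketch assumes each retriever already returns a ranked list of document IDs, and it uses Reciprocal Rank Fusion (RRF) as one common way to merge dense and sparse results; the text above does not prescribe a fusion method, and `k = 60` is a conventional default rather than a tuned value. Cross-encoder reranking would sit between fusion and evaluation but is omitted here because it requires a model. The nDCG function uses linear gains; some evaluation tooling uses 2^rel - 1 instead.

```python
import math


def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked lists of doc IDs (best first) by summing 1 / (k + rank)."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


def precision_at_k(ranked, relevant, k):
    """Fraction of the top-k results that are relevant."""
    return sum(1 for doc_id in ranked[:k] if doc_id in relevant) / k


def mean_reciprocal_rank(runs):
    """runs: list of (ranked_ids, relevant_id_set), one pair per query."""
    total = 0.0
    for ranked, relevant in runs:
        for rank, doc_id in enumerate(ranked, start=1):
            if doc_id in relevant:
                total += 1.0 / rank
                break  # only the first relevant hit counts
    return total / len(runs)


def ndcg_at_k(ranked, graded, k):
    """graded: doc_id -> relevance grade; unlisted docs count as 0 (linear gain)."""
    def dcg(gains):
        return sum(g / math.log2(i + 2) for i, g in enumerate(gains))
    ideal = dcg(sorted(graded.values(), reverse=True)[:k])
    return dcg([graded.get(d, 0) for d in ranked[:k]]) / ideal if ideal > 0 else 0.0


if __name__ == "__main__":
    dense = ["d3", "d1", "d7"]   # e.g. from an embedding index
    sparse = ["d1", "d9", "d3"]  # e.g. from BM25
    fused = reciprocal_rank_fusion([dense, sparse])
    print(fused)                                                 # ['d1', 'd3', 'd9', 'd7']
    print(precision_at_k(fused, {"d1", "d7"}, k=2))              # 0.5
    print(mean_reciprocal_rank([(fused, {"d3"})]))               # 0.5
    print(round(ndcg_at_k(fused, {"d1": 2, "d7": 1}, k=3), 3))   # 0.76
```

RRF only looks at ranks, not raw scores, which is why it is a popular default: cosine similarities and BM25 scores live on incompatible scales and would otherwise need careful normalization before they could be summed.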

How it works in Agentforce: Agentforce’s RAG capabilities are natively powered by the Salesforce Data Cloud. This eliminates the need to build a separate retrieval pipeline. Data Cloud provides intelligent, content-aware chunking and an out-of-the-box hybrid search engine that combines semantic and keyword retrieval across all your harmonized enterprise data. The platform includes a managed reranking service to boost precision, and provides built-in evaluation tools to ensure your agent’s responses are grounded in the most relevant, trustworthy information.

4. Composition over prompts: The new discipline of LLM system design

We have moved beyond prompt engineering as the primary skill. The new frontier is LLM system composition — the art and science of architecting how models, data sources, tools, and logical constructs interoperate. This involves designing modular and composable architectures where different LLMs, routing logic, and RAG pipelines can be dynamically selected and chained based on query complexity, cost, and latency requirements. The critical work is in monitoring, debugging, and optimizing these complex execution graphs, a practice that demands LLM-native observability tools capable of tracing requests across dozens of microservices and model calls.
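One way to picture composition with routing and tracing is the following sketch. Everything in it is a hypothetical placeholder: the model names, prices, and latencies in `TIERS`, the keyword-based `estimate_complexity` heuristic (production routers often use a trained classifier instead), and the in-memory `TRACE` list, which stands in for the spans an LLM-native observability tool would collect.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str                  # hypothetical model identifier
    cost_per_1k_tokens: float  # placeholder price
    p95_latency_ms: int        # placeholder latency
    max_complexity: int        # hardest query class this tier should handle


# Ordered cheapest first; all values are illustrative placeholders.
TIERS = [
    ModelTier("small-fast-model", 0.0002, 300, 1),
    ModelTier("mid-model", 0.002, 900, 2),
    ModelTier("large-reasoning-model", 0.03, 4000, 3),
]

TRACE = []  # stand-in for spans exported to an observability backend


@contextmanager
def span(name, **attrs):
    """Record the wall-clock duration of one step in the execution graph."""
    start = time.perf_counter()
    try:
        yield
    finally:
        TRACE.append({"name": name, "ms": (time.perf_counter() - start) * 1000, **attrs})


def estimate_complexity(query):
    """Crude keyword heuristic returning 1-3."""
    words = query.lower().split()
    score = 1
    if len(words) > 20:
        score += 1
    if any(w in words for w in ("compare", "why", "plan", "analyze")):
        score += 1
    return score


def route(query, latency_budget_ms):
    needed = estimate_complexity(query)
    for tier in TIERS:  # cheapest tier that is capable enough and fast enough
        if tier.max_complexity >= needed and tier.p95_latency_ms <= latency_budget_ms:
            return tier
    # Otherwise degrade to the most capable tier that still fits the budget.
    fits = [t for t in TIERS if t.p95_latency_ms <= latency_budget_ms]
    if fits:
        return max(fits, key=lambda t: t.max_complexity)
    return min(TIERS, key=lambda t: t.p95_latency_ms)  # nothing fits: fastest tier


def answer(query, latency_budget_ms=2000):
    with span("route", query_words=len(query.split())):
        tier = route(query, latency_budget_ms)
    with span("llm_call", model=tier.name):
        reply = f"[{tier.name}] ..."  # placeholder for the real model call
    return reply
```

The design choice worth noting is that routing and tracing are composed around the model call rather than baked into prompts, so a tier can be swapped or re-priced without touching agent logic, and every routing decision leaves a span that can be inspected when debugging.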

How it works in Agentforce: Agentforce is fundamentally a composition engine. It allows you to visually orchestrate and chain together all the necessary components: different LLMs, RAG queries into Data Cloud, and calls to internal and external tools. The platform features a dynamic model routing engine to optimize for cost and performance. Crucially, it provides end-to-end execution tracing, giving you a complete, step-by-step view of your agent’s reasoning process, making the otherwise impossible task of debugging complex AI systems manageable.

5. The production gap: Where AI demos end and real systems begin

The chasm between a Jupyter notebook demo and a production system is defined by operational realities. Demos lack cost-per-query budgets, p99 latency targets, stringent security postures (guarding against prompt injection and data exfiltration), and the need to integrate with legacy enterprise systems. The organizations that will dominate the next decade are not those with marginally better models, but those with superior deployment velocity and operational excellence. They will have mastered model routing to balance cost and performance (e.g., using GPT-4 for complex reasoning and a cheaper, fine-tuned model for classification), implemented robust caching strategies at every layer, and built the infrastructure to safely A/B test new agentic behaviors in production.
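Two of these operational patterns, response caching and safe A/B rollout, can be sketched with the standard library. The assumptions are loud ones: an in-process LRU cache with a TTL stands in for a shared cache such as Redis, the cache key includes a tenant ID so one customer's cached answer can never be served to another, and users are assigned to experiment arms by hashing a stable user ID. All names (`TTLCache`, `cache_key`, `ab_arm`) are hypothetical.

```python
import hashlib
import time
from collections import OrderedDict


class TTLCache:
    """In-process LRU cache with per-entry expiry (stand-in for e.g. Redis)."""

    def __init__(self, max_entries=1024, ttl_s=300.0):
        self.max_entries = max_entries
        self.ttl_s = ttl_s
        self._data = OrderedDict()  # key -> (expires_at, value)

    def get(self, key):
        item = self._data.get(key)
        if item is None:
            return None
        expires_at, value = item
        if time.monotonic() >= expires_at:
            del self._data[key]  # lazily drop expired entries
            return None
        self._data.move_to_end(key)  # mark as most recently used
        return value

    def put(self, key, value):
        self._data[key] = (time.monotonic() + self.ttl_s, value)
        self._data.move_to_end(key)
        while len(self._data) > self.max_entries:
            self._data.popitem(last=False)  # evict the least recently used entry


def cache_key(tenant_id, model, prompt):
    """Scope keys by tenant and model; normalize whitespace and case."""
    normalized = " ".join(prompt.lower().split())
    return hashlib.sha256(f"{tenant_id}\x00{model}\x00{normalized}".encode()).hexdigest()


def ab_arm(user_id, experiment, treatment_pct=10):
    """Deterministically assign a user to 'treatment' or 'control'."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    return "treatment" if bucket < treatment_pct else "control"
```

Hashing the experiment name together with the user ID keeps each user in the same arm across sessions while decorrelating assignments between experiments. Lowercasing prompts before caching is only safe when casing cannot change the answer.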

How it works in Agentforce: Agentforce is built on the Salesforce platform, inheriting the comprehensive Trust Layer that leading enterprises rely on. This means granular data permissions, security, governance, and compliance are not afterthoughts — they are the foundation. The platform provides built-in mechanisms for agent management, performance optimization through caching, and safe deployment practices including testing. By handling these critical “last mile” production challenges, Agentforce ensures the AI systems you build are not just intelligent, but also secure, compliant, and enterprise-ready from the start.

An integrated stack for enterprise-grade AI agents

The competitive advantage in generative AI no longer lies in privileged access to foundational models, but in the engineering discipline needed to build real systems around them. Leaders are treating LLMs as a new kind of distributed, non-deterministic compute resource and are embedding agents deep within core business workflows, not just at the chat-interface periphery. They are learning and iterating at an exponential rate because they are deploying at an exponential rate.

While building these systems from first principles is a monumental task reserved for the most sophisticated engineering organizations, an alternative paradigm is emerging: leveraging a fully integrated platform to abstract away this foundational complexity.

This is precisely the problem that Salesforce is tackling with the combination of Data Cloud and Agentforce. This integrated stack directly addresses the critical challenges of data grounding and agent orchestration at enterprise scale.

First, Salesforce Data Cloud acts as the hyperscale data engine and grounding layer essential for high-fidelity RAG. It solves the core problem of fragmented, siloed enterprise data by ingesting and harmonizing structured and unstructured information into a unified metadata layer. This provides a trusted, real-time, and contextually aware foundation for LLMs, transforming the chaotic “garbage in, garbage out” retrieval problem into a reliable process of grounding responses in secure, customer-specific data.

Building on this foundation, Agentforce provides the managed orchestration and trust layer for building and deploying agents. It abstracts the immense complexity of managing Kubernetes clusters, building bespoke state machines, and engineering fault-tolerant tool-use logic. Instead, it offers a secure, declarative framework for designing agentic workflows that can reliably act on the harmonized data from Data Cloud. By handling the underlying infrastructure, security, governance, and permissions, it allows engineering teams to bypass years of foundational plumbing and focus directly on designing agents that solve business problems — all within a trusted environment that enterprises already rely on.

Ultimately, this platform-based approach allows organizations to leapfrog the most difficult parts of the journey, shifting their focus from building the infrastructure to building the intelligence.

Want more content like this?

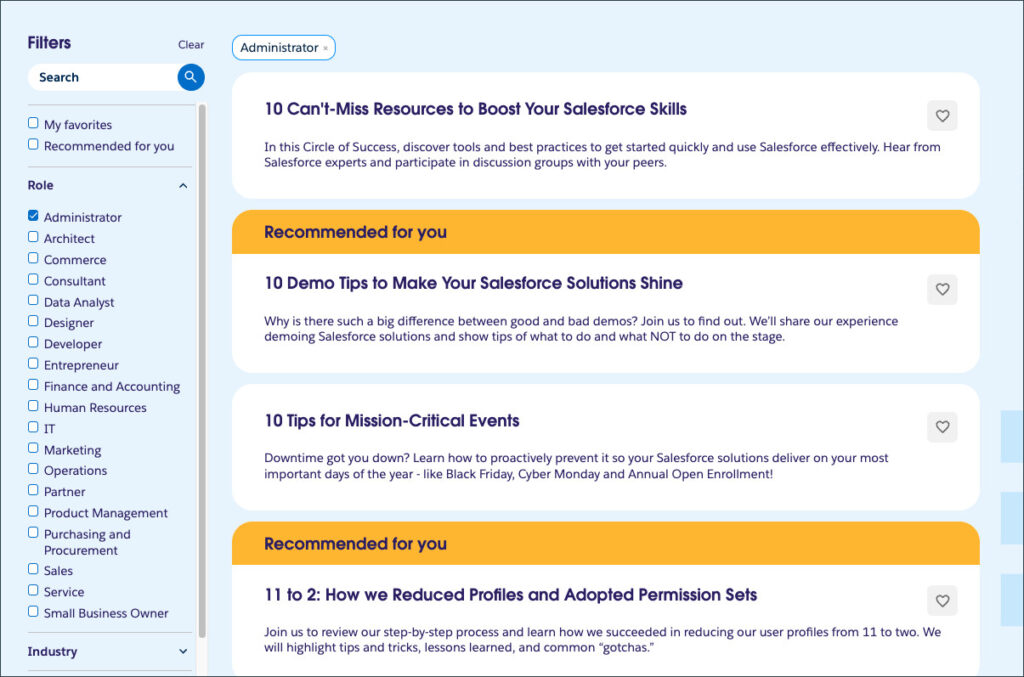

Check out the Agentforce Guide for more tips and best practices and join the Agentblazer community!