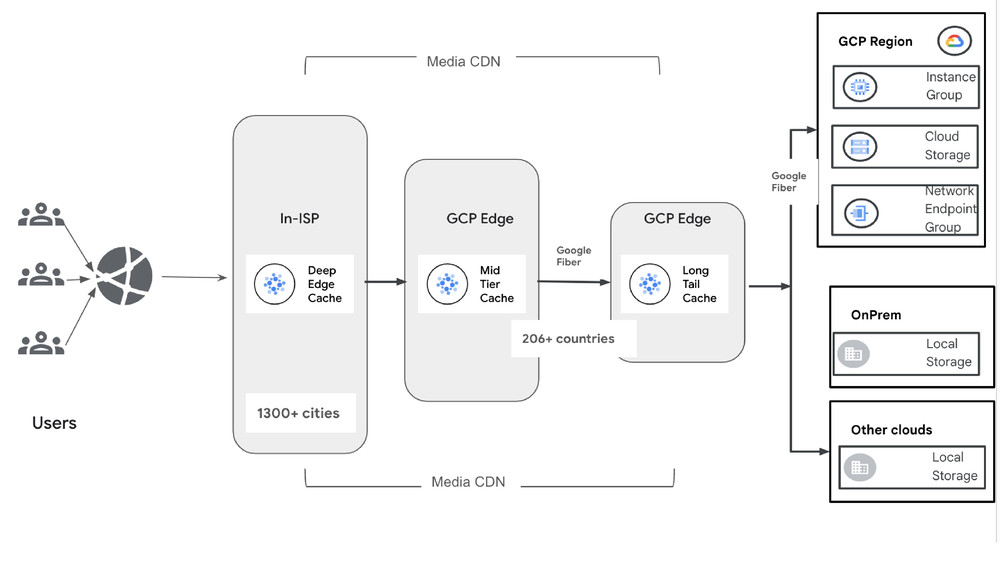

Building and serving models on your own infrastructure is a strong use case for businesses. In Google Cloud, you can design your AI infrastructure to suit your workloads. Recently, I experimented with Google Kubernetes Engine (GKE) managed DRANET while deploying a model for inference on NVIDIA B200 GPUs on GKE. In this blog, we will walk through this setup in easy-to-follow steps.

What is DRANET

Dynamic Resource Allocation (DRA) is a Kubernetes feature that lets you request and share resources among Pods. DRANET builds on DRA to let you request and allocate networking resources for your Pods, including network interfaces that support TPUs and Remote Direct Memory Access (RDMA). In my case, those interfaces serve high-end GPUs.

How the GPU RDMA VPC works

The RDMA network is set up as an isolated, regional VPC that is assigned a network profile type; in this case, RoCEv2. This VPC is dedicated to GPU-to-GPU communication. The GPU VM families have RDMA-capable NICs that connect to the RDMA VPC, and GPUs on different nodes communicate through this low-latency, high-speed, rail-aligned network.
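To picture what "rail aligned" means, the Python sketch below models it under a simplifying assumption that is mine, not taken from the GKE documentation: each GPU has its own RDMA NIC, and NIC i on every node connects to the same rail i. Under that assumption, GPU 3 on one node and GPU 3 on another node share a rail and can talk over it directly. The function names are hypothetical and purely illustrative.

```python
from collections import defaultdict

# Hypothetical model for illustration only (not a GKE or NVIDIA API):
# each GPU has its own RDMA NIC, and NIC i on every node is cabled to rail i.
GPUS_PER_NODE = 8  # A4 nodes in this post carry 8 NVIDIA B200 GPUs


def rail_of(gpu_index: int) -> int:
    """Return the rail a GPU's NIC attaches to (assumed: rail == local GPU index)."""
    if not 0 <= gpu_index < GPUS_PER_NODE:
        raise ValueError(f"gpu_index must be in [0, {GPUS_PER_NODE})")
    return gpu_index


def group_by_rail(nodes: list[str]) -> dict[int, list[tuple[str, int]]]:
    """Group every (node, gpu) endpoint by the rail its NIC sits on."""
    grouped: dict[int, list[tuple[str, int]]] = defaultdict(list)
    for node in nodes:
        for gpu in range(GPUS_PER_NODE):
            grouped[rail_of(gpu)].append((node, gpu))
    return dict(grouped)


def same_rail(a: tuple[str, int], b: tuple[str, int]) -> bool:
    """True if two GPUs on different nodes share a rail in this model."""
    return a[0] != b[0] and rail_of(a[1]) == rail_of(b[1])


if __name__ == "__main__":
    layout = group_by_rail(["node-a", "node-b"])
    print(layout[3])                                # [('node-a', 3), ('node-b', 3)]
    print(same_rail(("node-a", 3), ("node-b", 3)))  # True
    print(same_rail(("node-a", 3), ("node-b", 5)))  # False
```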

Design pattern example

Our aim was to deploy an LLM (DeepSeek) onto a GKE cluster with A4 nodes, each with 8 NVIDIA B200 GPUs, and serve it privately through GKE Inference Gateway. You can use Cluster Toolkit to set up an AI Hypercomputer GKE cluster, but in my case I wanted to test how GKE managed DRANET dynamically sets up the RDMA networking used for GPU-to-GPU communication.

This design utilizes the following services to provide an end-to-end solution:

- VPC: Three VPCs in total. One is created manually; GKE managed DRANET automatically creates the other two, one standard VPC and one RDMA VPC.
- GKE: To deploy the workload.
- GKE Inference Gateway: To expose the workload internally through a regional internal Application Load Balancer (GatewayClass gke-l7-rilb).
- A4 VMs: These support RoCEv2 with NVIDIA B200 GPUs.

Putting it together

To get access to A4 VMs, I used a future reservation. The reservation is linked to a specific zone, so the cluster and GPU node pool are created in that zone.

Begin: Set up the environment

- Create a standard VPC with firewall rules and a subnet in the region that contains the reservation's zone (subnets are regional).
- Create a proxy-only subnet. This is used by the regional internal Application Load Balancer attached to the GKE Inference Gateway.
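The post does not list the exact commands or firewall rules for this step. As a hedged sketch, the Python below prints (but does not run) one possible set of gcloud commands. The prefix, zone and CIDR ranges are hypothetical placeholders, the -main and -sub names follow the ${GVNIC_NETWORK_PREFIX}-main and ${GVNIC_NETWORK_PREFIX}-sub values used by the cluster command, and the single internal firewall rule is an illustrative assumption. It derives the region from the reservation's zone, since subnets, including the proxy-only subnet, are regional.

```python
import shlex

# Hypothetical values: replace with your own.
PREFIX = "dranet-demo"          # stands in for ${GVNIC_NETWORK_PREFIX}
ZONE = "us-central1-b"          # the zone your reservation is linked to
SUBNET_RANGE = "10.10.0.0/20"   # placeholder CIDR for the node subnet
PROXY_RANGE = "10.129.0.0/23"   # placeholder CIDR for the proxy-only subnet


def region_from_zone(zone: str) -> str:
    """Subnets are regional, so strip the zone suffix: 'us-central1-b' -> 'us-central1'."""
    return zone.rsplit("-", 1)[0]


def setup_commands(prefix: str, zone: str, subnet_range: str, proxy_range: str) -> list[str]:
    """Return gcloud commands for the main VPC, subnet, firewall rule and proxy-only subnet."""
    region = region_from_zone(zone)
    net = f"{prefix}-main"
    cmds = [
        ["gcloud", "compute", "networks", "create", net, "--subnet-mode=custom"],
        ["gcloud", "compute", "networks", "subnets", "create", f"{prefix}-sub",
         f"--network={net}", f"--region={region}", f"--range={subnet_range}"],
        # Illustrative rule only: allow internal traffic from the node subnet.
        ["gcloud", "compute", "firewall-rules", "create", f"{prefix}-allow-internal",
         f"--network={net}", "--allow=tcp,udp,icmp", f"--source-ranges={subnet_range}"],
        # Proxy-only subnet for the regional internal Application Load Balancer.
        ["gcloud", "compute", "networks", "subnets", "create", f"{prefix}-proxy",
         "--purpose=REGIONAL_MANAGED_PROXY", "--role=ACTIVE",
         f"--network={net}", f"--region={region}", f"--range={proxy_range}"],
    ]
    return [shlex.join(c) for c in cmds]


if __name__ == "__main__":
    # Dry run: print the commands so you can review them before running them.
    for cmd in setup_commands(PREFIX, ZONE, SUBNET_RANGE, PROXY_RANGE):
        print(cmd)
```

Review the printed commands before running them in Cloud Shell. The proxy-only subnet must be in the same VPC and region as the Gateway's load balancer.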

Next: Create a Standard GKE cluster with a default node pool.

```bash
gcloud container clusters create $CLUSTER_NAME \
  --location=$ZONE \
  --num-nodes=1 \
  --machine-type=e2-standard-16 \
  --network=${GVNIC_NETWORK_PREFIX}-main \
  --subnetwork=${GVNIC_NETWORK_PREFIX}-sub \
  --release-channel rapid \
  --enable-dataplane-v2 \
  --enable-ip-alias \
  --addons=HttpLoadBalancing,RayOperator \
  --gateway-api=standard \
  --enable-ray-cluster-logging \
  --enable-ray-cluster-monitoring \
  --enable-managed-prometheus \
  --enable-dataplane-v2-metrics \
  --monitoring=SYSTEM
```

Once that is complete, connect to your cluster:

```bash
gcloud container clusters get-credentials $CLUSTER_NAME --zone $ZONE --project $PROJECT
```

Create a GPU node pool (this example uses A4 VMs from the reservation) with these additional flags:

- `--accelerator-network-profile=auto`: GKE automatically adds the gke.networks.io/accelerator-network-profile: auto label to the nodes.
- `--node-labels=cloud.google.com/gke-networking-dra-driver=true`: Enables DRA for high-performance networking.

```bash
gcloud beta container node-pools create $NODE_POOL_NAME \
  --cluster $CLUSTER_NAME \
  --location $ZONE \
  --node-locations $ZONE \
  --machine-type a4-highgpu-8g \
  --accelerator type=nvidia-b200,count=8,gpu-driver-version=latest \
  --enable-autoscaling --num-nodes=1 --total-min-nodes=1 --total-max-nodes=3 \
  --reservation-affinity=specific \
  --reservation=projects/$PROJECT/reservations/$RESERVATION_NAME/reservationBlocks/$BLOCK_NAME \
  --accelerator-network-profile=auto \
  --node-labels=cloud.google.com/gke-networking-dra-driver=true
```
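Once the node pool is up, you can confirm that the two labels this setup depends on actually landed on the GPU nodes. A minimal Python sketch, assuming you first save the output of kubectl get nodes -o json to a file (the file name nodes.json and the script name check_nodes.py are hypothetical):

```python
import json
import sys

# Labels this setup relies on: GKE adds the first because of
# --accelerator-network-profile=auto; the second comes from --node-labels.
EXPECTED_LABELS = {
    "gke.networks.io/accelerator-network-profile": "auto",
    "cloud.google.com/gke-networking-dra-driver": "true",
}


def dranet_ready_nodes(node_list: dict) -> list[str]:
    """Return the names of nodes that carry every expected label with the expected value."""
    ready = []
    for item in node_list.get("items", []):
        meta = item.get("metadata", {})
        labels = meta.get("labels", {})
        if all(labels.get(key) == value for key, value in EXPECTED_LABELS.items()):
            ready.append(meta["name"])
    return ready


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: kubectl get nodes -o json > nodes.json && python3 check_nodes.py nodes.json
    with open(sys.argv[1]) as f:
        print(dranet_ready_nodes(json.load(f)))
```

An empty list means the GPU nodes are missing at least one label, so the nodeSelector in the Deployment would not match them.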

Next: Create a ResourceClaimTemplate, which attaches the networking resources to your Pods. The device class mrdma.google.com is used for GPU workloads, and allocationMode: All requests every matching device:

```yaml
apiVersion: resource.k8s.io/v1
kind: ResourceClaimTemplate
metadata:
  name: all-mrdma
spec:
  spec:
    devices:
      requests:
      - name: req-mrdma
        exactly:
          deviceClassName: mrdma.google.com
          allocationMode: All
```

Deploy model and inference

Now that the cluster and node pool are set up, we can deploy a model and serve it through GKE Inference Gateway. In my experiment I used DeepSeek, but this could be any model.

Deploy model and services

- The nodeSelector gke.networks.io/accelerator-network-profile: auto schedules the Pod onto the GPU nodes.
- The resourceClaims field attaches the networking resources defined in the all-mrdma ResourceClaimTemplate.

Create a secret (I used a Hugging Face token):

```bash
kubectl create secret generic hf-secret \
  --from-literal=hf_token=${HF_TOKEN}
```

Deployment

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: deepseek-v3-1-deploy
spec:
  replicas: 1
  selector:
    matchLabels:
      app: deepseek-v3-1
  template:
    metadata:
      labels:
        app: deepseek-v3-1
        ai.gke.io/model: deepseek-v3-1
        ai.gke.io/inference-server: vllm
        examples.ai.gke.io/source: user-guide
    spec:
      containers:
      - name: vllm-inference
        image: us-docker.pkg.dev/vertex-ai/vertex-vision-model-garden-dockers/pytorch-vllm-serve:20250819_0916_RC01
        resources:
          requests:
            cpu: "190"
            memory: "1800Gi"
            ephemeral-storage: "1Ti"
            nvidia.com/gpu: "8"
          limits:
            cpu: "190"
            memory: "1800Gi"
            ephemeral-storage: "1Ti"
            nvidia.com/gpu: "8"
          claims:
          - name: rdma-claim
        command: ["python3", "-m", "vllm.entrypoints.openai.api_server"]
        args:
        - --model=$(MODEL_ID)
        - --tensor-parallel-size=8
        - --host=0.0.0.0
        - --port=8000
        - --max-model-len=32768
        - --max-num-seqs=32
        - --gpu-memory-utilization=0.90
        - --enable-chunked-prefill
        - --enforce-eager
        - --trust-remote-code
        env:
        - name: MODEL_ID
          value: deepseek-ai/DeepSeek-V3.1
        - name: HUGGING_FACE_HUB_TOKEN
          valueFrom:
            secretKeyRef:
              name: hf-secret
              key: hf_token
        volumeMounts:
        - mountPath: /dev/shm
          name: dshm
        livenessProbe:
          httpGet:
            path: /health
            port: 8000
          initialDelaySeconds: 1800
          periodSeconds: 10
        readinessProbe:
          httpGet:
            path: /health
            port: 8000
          initialDelaySeconds: 1800
          periodSeconds: 5
      volumes:
      - name: dshm
        emptyDir:
          medium: Memory
      nodeSelector:
        gke.networks.io/accelerator-network-profile: auto
      resourceClaims:
      - name: rdma-claim
        resourceClaimTemplateName: all-mrdma
---
apiVersion: v1
kind: Service
metadata:
  name: deepseek-v3-1-service
spec:
  selector:
    app: deepseek-v3-1
  type: ClusterIP
  ports:
  - protocol: TCP
    port: 8000
    targetPort: 8000
---
apiVersion: monitoring.googleapis.com/v1
kind: PodMonitoring
metadata:
  name: deepseek-v3-1-monitoring
spec:
  selector:
    matchLabels:
      app: deepseek-v3-1
  endpoints:
  - port: 8000
    path: /metrics
    interval: 30s
```
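Three things in this Deployment have to line up: the container's resources.claims entry must name a claim declared in spec.resourceClaims, the nodeSelector must target the accelerator-network-profile=auto nodes, and, in this single-node setup, --tensor-parallel-size should match the number of GPUs requested (8 here). The Python sketch below is a hypothetical pre-flight check for those three points. It is not part of GKE or vLLM tooling, and it works on the manifest as a plain dict.

```python
def check_deployment(deploy: dict) -> list[str]:
    """Return a list of problems found in a vLLM Deployment manifest (empty if none)."""
    problems = []
    pod = deploy["spec"]["template"]["spec"]
    declared = {claim["name"] for claim in pod.get("resourceClaims", [])}

    # The Pod must land on nodes labelled by --accelerator-network-profile=auto.
    if pod.get("nodeSelector", {}).get("gke.networks.io/accelerator-network-profile") != "auto":
        problems.append("nodeSelector does not target accelerator-network-profile=auto nodes")

    for c in pod["containers"]:
        res = c.get("resources", {})
        # Every claim a container uses must be declared at Pod level.
        for claim in res.get("claims", []):
            if claim["name"] not in declared:
                problems.append(f"{c['name']}: claim {claim['name']!r} not in spec.resourceClaims")
        # Tensor parallel size should use all requested GPUs on the node.
        gpus = int(res.get("limits", {}).get("nvidia.com/gpu", 0))
        tp = next((int(a.split("=", 1)[1]) for a in c.get("args", [])
                   if a.startswith("--tensor-parallel-size=")), None)
        if tp is not None and tp != gpus:
            problems.append(f"{c['name']}: tensor-parallel-size {tp} != {gpus} GPUs")
    return problems


if __name__ == "__main__":
    # Trimmed-down copy of the fields this check reads from the Deployment above.
    example = {"spec": {"template": {"spec": {
        "nodeSelector": {"gke.networks.io/accelerator-network-profile": "auto"},
        "resourceClaims": [{"name": "rdma-claim", "resourceClaimTemplateName": "all-mrdma"}],
        "containers": [{
            "name": "vllm-inference",
            "args": ["--model=$(MODEL_ID)", "--tensor-parallel-size=8"],
            "resources": {"limits": {"nvidia.com/gpu": "8"}, "claims": [{"name": "rdma-claim"}]},
        }],
    }}}}
    print(check_deployment(example) or "no problems found")
```

To check the real file, one option (assuming PyYAML is installed; it is not in the standard library) is to load it with yaml.safe_load_all and pass the first document, the Deployment, to check_deployment.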

Deploy GKE Inference Gateway

Install the required Custom Resource Definitions (CRDs) in your GKE cluster:

For GKE versions 1.34.0-gke.1626000 or later, install only the alpha InferenceObjective CRD:

```bash
kubectl apply -f https://github.com/kubernetes-sigs/gateway-api-inference-extension/raw/v1.0.0/config/crd/bases/inference.networking.x-k8s.io_inferenceobjectives.yaml
```

Create the InferencePool

```bash
helm install deepseek-v3-pool \
  oci://registry.k8s.io/gateway-api-inference-extension/charts/inferencepool \
  --version v1.0.1 \
  --set inferencePool.modelServers.matchLabels.app=deepseek-v3-1 \
  --set provider.name=gke \
  --set inferenceExtension.monitoring.gke.enabled=true
```

Create the Gateway, HTTPRoute and InferenceObjective

```yaml
# 1. The Regional Internal Gateway (ILB)
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: deepseek-v3-gateway
  namespace: default
spec:
  gatewayClassName: gke-l7-rilb
  listeners:
  - name: http
    protocol: HTTP
    port: 80
    allowedRoutes:
      namespaces:
        from: Same
---
# 2. The HTTPRoute (Routing to the Pool)
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: deepseek-v3-route
  namespace: default
spec:
  parentRefs:
  - name: deepseek-v3-gateway
  rules:
  - matches:
    - path:
        type: PathPrefix
        value: /
    backendRefs:
    - group: inference.networking.k8s.io
      kind: InferencePool
      name: deepseek-v3-pool
---
# 3. The Inference Objective (Performance Logic)
apiVersion: inference.networking.x-k8s.io/v1alpha2
kind: InferenceObjective
metadata:
  name: deepseek-v3-objective
  namespace: default
spec:
  poolRef:
    name: deepseek-v3-pool
```
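The Gateway's internal IP appears in status.addresses once the load balancer has been provisioned, which can take several minutes. kubectl get gateway deepseek-v3-gateway -o jsonpath='{.status.addresses[0].value}' is the quick way to read it; the Python sketch below additionally waits for the Gateway API Programmed condition before trusting the address. The file and script names in the usage comment are hypothetical.

```python
import json
import sys
from typing import Optional


def gateway_address(gateway: dict) -> Optional[str]:
    """Return the Gateway's first address once it is Programmed, else None."""
    status = gateway.get("status", {})
    programmed = any(
        cond.get("type") == "Programmed" and cond.get("status") == "True"
        for cond in status.get("conditions", [])
    )
    if not programmed:
        return None  # load balancer not provisioned yet; try again later
    for addr in status.get("addresses", []):
        if addr.get("value"):
            return addr["value"]
    return None


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: kubectl get gateway deepseek-v3-gateway -o json > gw.json
    #        python3 gateway_ip.py gw.json
    with open(sys.argv[1]) as f:
        print(gateway_address(json.load(f)) or "Gateway not ready yet")
```

The returned value is what the curl example uses as $GATEWAY_IP.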

Once complete, you can create a test VM in your main VPC and make a call to the IP address of the GKE Inference Gateway:

```bash
curl -N -s -X POST "http://$GATEWAY_IP/v1/chat/completions" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "deepseek-ai/DeepSeek-V3.1",
    "messages": [{"role": "user", "content": "Box A: red. Box B: blue. Box C: empty. Move A to C, Move B to A, Swap B and C. Where is red?"}],
    "stream": true
  }' | stdbuf -oL grep "data: " | sed -u 's/^data: //' | grep -v "\[DONE\]" | \
  jq --unbuffered -rj '.choices[0].delta | (.reasoning_content // .reasoning // .content // empty)'
```
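If you'd rather call the endpoint from code than from a curl and jq pipeline, here is a minimal standard-library Python sketch of the same request. delta_text mirrors the jq filter: for each data: line it takes reasoning_content, falling back to reasoning and then content, and it stops at [DONE]. It assumes the Gateway IP is in a GATEWAY_IP environment variable, as in the curl example; it is an illustration, not an official client.

```python
import json
import os
import urllib.request
from typing import Iterable, Iterator


def delta_text(sse_lines: Iterable[str]) -> Iterator[str]:
    """Yield text from OpenAI-style server-sent event lines, like the jq filter above."""
    for line in sse_lines:
        line = line.strip()
        if not line.startswith("data: "):
            continue  # skip blank keep-alive lines and anything that is not an event
        payload = line[len("data: "):]
        if payload == "[DONE]":
            break
        choices = json.loads(payload).get("choices") or []
        if not choices:
            continue
        delta = choices[0].get("delta") or {}
        for key in ("reasoning_content", "reasoning", "content"):
            value = delta.get(key)
            if value is not None:  # like jq's //, fall through only on null
                if value:
                    yield value
                break


def stream_chat(gateway_ip: str, prompt: str,
                model: str = "deepseek-ai/DeepSeek-V3.1") -> Iterator[str]:
    """POST a streaming chat completion to the Gateway and yield text pieces."""
    body = json.dumps({"model": model,
                       "messages": [{"role": "user", "content": prompt}],
                       "stream": True}).encode("utf-8")
    req = urllib.request.Request(f"http://{gateway_ip}/v1/chat/completions", data=body,
                                 headers={"Content-Type": "application/json"}, method="POST")
    with urllib.request.urlopen(req) as resp:
        # Iterating the response yields raw lines as bytes.
        yield from delta_text(raw.decode("utf-8") for raw in resp)


if __name__ == "__main__":
    ip = os.environ.get("GATEWAY_IP")
    if ip:
        for piece in stream_chat(ip, "Box A: red. Box B: blue. Box C: empty. "
                                     "Move A to C, Move B to A, Swap B and C. Where is red?"):
            print(piece, end="", flush=True)
        print()
```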

Next Steps

To take a deeper dive into GKE managed DRANET and GKE Inference Gateway, review the following:

- Blog: DRA: A new era of Kubernetes device management with Dynamic Resource Allocation
- Document set: DRANET
- Documentation: AI Hypercomputer

Want to ask a question, find out more or share a thought? Please connect with me on LinkedIn.