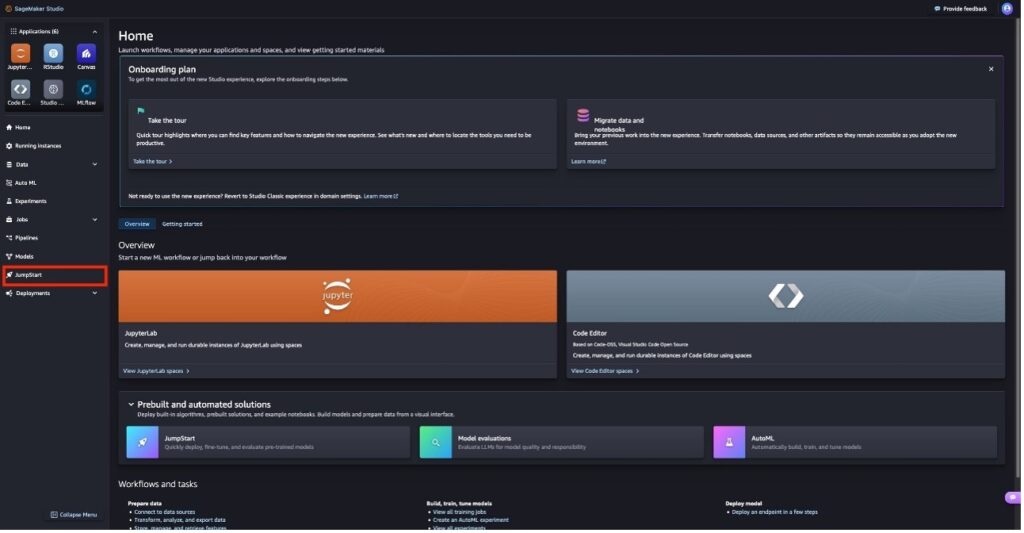

Align Meta Llama 3 to human preferences with DPO, Amazon SageMaker Studio, and Amazon SageMaker Ground Truth

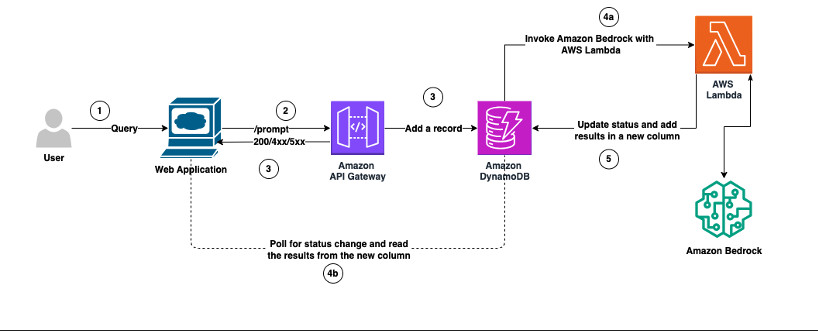

Large language models (LLMs) have remarkable capabilities. Nevertheless, using them in customer-facing applications often requires tailoring their responses to align with your organization’s values and brand identity. In this post, we demonstrate how to use direct preference optimization (DPO), a technique that allows you to fine-tune an LLM with human preference data, together with Amazon […]