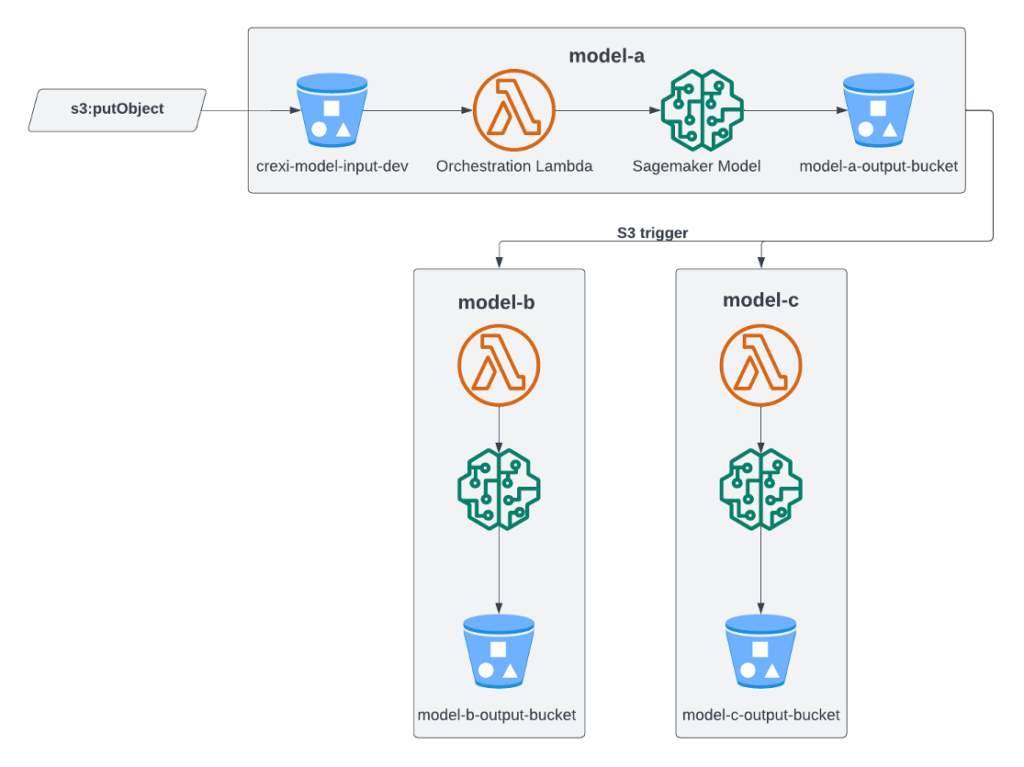

Read graphs, diagrams, tables, and scanned pages using multimodal prompts in Amazon Bedrock

Large language models (LLMs) have advanced from reading only text to reading and understanding graphs, diagrams, tables, and images. In this post, we discuss how to use LLMs from Amazon Bedrock not only to extract text, but also to understand the information contained in images. Amazon Bedrock […]