Run small language models cost-efficiently with AWS Graviton and Amazon SageMaker AI

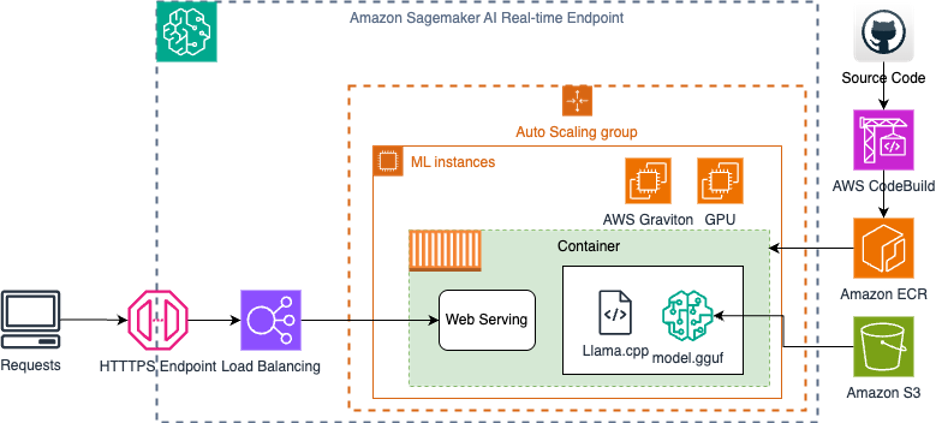

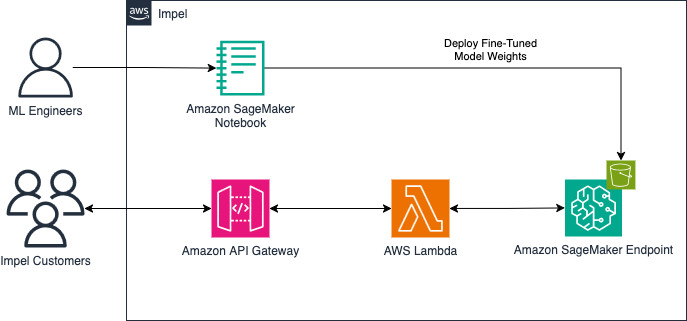

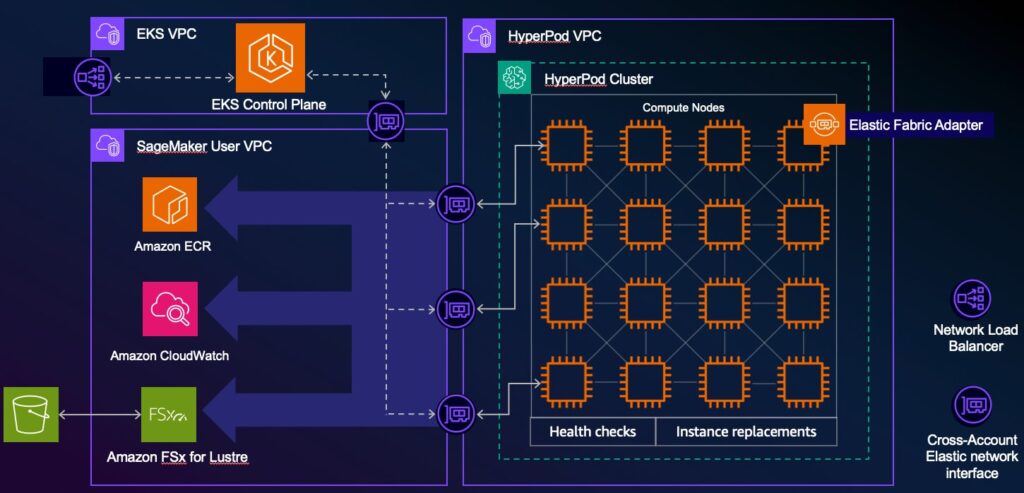

As organizations look to incorporate AI capabilities into their applications, large language models (LLMs) have emerged as powerful tools for natural language processing tasks. Amazon SageMaker AI is a fully managed service for deploying these machine learning (ML) models. It offers multiple inference options, so organizations can optimize for cost, latency, and throughput. AWS has always […]