In freeskiing and snowboarding, traditional video replay shows what happened during a complex aerial maneuver, but it fails to explain the physics that made it possible. At the speeds this sport demands, translating motion into actionable data (joint angles, rotational velocities, body compression) is extremely difficult. It requires tracking and analyzing a full three-dimensional model of the athlete, frame by frame, in real time.

In collaboration with Google DeepMind, we built a system to provide this analysis to U.S. Olympians ahead of the Olympic Winter Games. Our AI pose estimation model transforms a single 2D video into a complete 3D biomechanical analysis, plotting 63 joints in a localized coordinate system. For athletes and coaches, it provides a revolutionary competitive edge. For broader use cases, it turns human movement into objective data.
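To make "objective data" concrete, here is a minimal sketch of two of the metrics named above, a joint angle and a rotational velocity, computed from 3D joint positions. It assumes each joint is an (x, y, z) tuple in a body-local frame with z pointing up, and a fixed frame rate. The function names and sample values are hypothetical and do not reflect the model's actual output format.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def joint_angle_deg(proximal: Vec3, vertex: Vec3, distal: Vec3) -> float:
    """Angle at `vertex` (e.g. the knee) between the segments pointing to
    `proximal` (e.g. the hip) and `distal` (e.g. the ankle), in degrees."""
    u = _sub(proximal, vertex)
    v = _sub(distal, vertex)
    dot = sum(ui * vi for ui, vi in zip(u, v))
    norm = math.hypot(*u) * math.hypot(*v)
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos_theta = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_theta))


def yaw_rate_deg_per_s(
    left_hip: Sequence[Vec3], right_hip: Sequence[Vec3], fps: float
) -> List[float]:
    """Rotation rate about the vertical (z) axis, estimated from the heading
    of the hip line in consecutive frames."""
    headings = [math.atan2(r[1] - l[1], r[0] - l[0]) for l, r in zip(left_hip, right_hip)]
    rates = []
    for prev, cur in zip(headings, headings[1:]):
        # Wrap the difference into [-pi, pi) so crossing +/-180 degrees
        # is read as a small turn, not a near-full reverse spin.
        delta = (cur - prev + math.pi) % (2 * math.pi) - math.pi
        rates.append(math.degrees(delta) * fps)
    return rates
```

For example, a hip at (0, 1, 0), knee at the origin, and ankle at (1, 0, 0) give a 90-degree knee angle; a fully extended leg gives 180 degrees.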

The challenge: extreme conditions break standard vision

Generating a 63-joint 3D skeleton from 2D video is a massive computational workload. Doing it without lab-grade sensors, in unpredictable outdoor environments, pushes computer vision to its limits. Snowboarders and skiers move at extreme velocities and wear bulky gear. When they tuck for a grab or spin, limbs disappear from view, and standard pose estimation models lose tracking the moment that occlusion occurs.

Our solution relies on a proprietary model of human motion. Instead of treating each frame in isolation, it uses learned priors to infer the position of hidden joints based on the body’s overall trajectory. This temporal reasoning maintains a stable digital skeleton even through rapid, inverted rotations.
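The value of reasoning across frames can be illustrated with a far simpler stand-in than the proprietary model: linear interpolation of a hidden joint between the nearest frames where it was visible. This is not how the learned prior works. It is a minimal sketch, assuming one joint's track is a list of (x, y, z) positions with None marking occluded frames; the function name is hypothetical.

```python
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]


def fill_occluded(track: List[Optional[Vec3]]) -> List[Optional[Vec3]]:
    """Fill None entries (frames where the joint was hidden) by linear
    interpolation between the nearest visible frames on either side.
    Gaps at the start or end of the clip have no bracketing frames, so
    they are left as None."""
    filled = list(track)
    visible = [i for i, p in enumerate(track) if p is not None]
    for a, b in zip(visible, visible[1:]):
        gap = b - a
        if gap <= 1:
            continue  # consecutive visible frames, nothing to fill
        pa, pb = track[a], track[b]
        for i in range(a + 1, b):
            t = (i - a) / gap  # fraction of the way from frame a to frame b
            filled[i] = tuple(ca + t * (cb - ca) for ca, cb in zip(pa, pb))
    return filled
```

A per-frame model has nothing to go on while a limb is hidden; even this naive version recovers a plausible position from neighboring frames. Straight-line interpolation cuts corners on the curved paths limbs follow during a spin, which is the gap a learned motion prior is meant to close.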

The infrastructure: TPUs and Vertex AI

Solving occlusion is only half the battle. Delivering these insights within seconds of a U.S. Olympian landing requires heavy-duty infrastructure, so we built a high-performance inference engine on Google Cloud to handle the intense MLOps demands of the competition.

The hardware foundation: TPUs

At the core of the pipeline are Google’s Tensor Processing Units (TPUs), tasked with the heaviest matrix math. An encoder first compresses the video into a latent representation, and a video transformer model predicts the 3D joint positions.

To eliminate the standard cloud “cold start” delay (the time spent provisioning hardware and loading a model before it can serve requests), we statically provisioned dedicated TPU slices for the duration of Team USA’s competition at the Olympic Winter Games. This kept the models perpetually loaded in High-Bandwidth Memory (HBM), so every incoming video hits a “warm” TPU and gets near-instantaneous, predictable inference without the resource contention of a multi-tenant environment.
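The same warm-versus-cold idea applies at the level of the serving process: pay the model-loading cost once at startup and reuse the resident model for every request. This is a plain-Python sketch of that pattern only. `load_model` and the simulated delay are hypothetical stand-ins for loading weights into accelerator memory, and nothing here is a TPU or Vertex AI API.

```python
import functools
import time

LOAD_COUNT = 0  # tracks how many times the expensive load actually ran


@functools.lru_cache(maxsize=None)
def load_model():
    """Hypothetical stand-in for loading weights into accelerator memory.
    lru_cache makes every call after the first return the same object."""
    global LOAD_COUNT
    LOAD_COUNT += 1
    time.sleep(0.05)  # simulate a slow cold start
    return lambda frames: [f"pose_{i}" for i, _ in enumerate(frames)]


def handle_request(frames):
    model = load_model()  # cache hit after warm-up: no load on the request path
    return model(frames)


# Warm-up at process start, before any athlete's video arrives.
load_model()
```

After the warm-up call, `handle_request` never triggers another load, so request latency excludes the startup cost entirely.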

Orchestration at scale: Vertex AI

Deploying to a single lab server is easy; orchestrating inference on live competition at the Olympic Games is not. Vertex AI provided the unified control plane to manage volume, complexity, and latency:

-

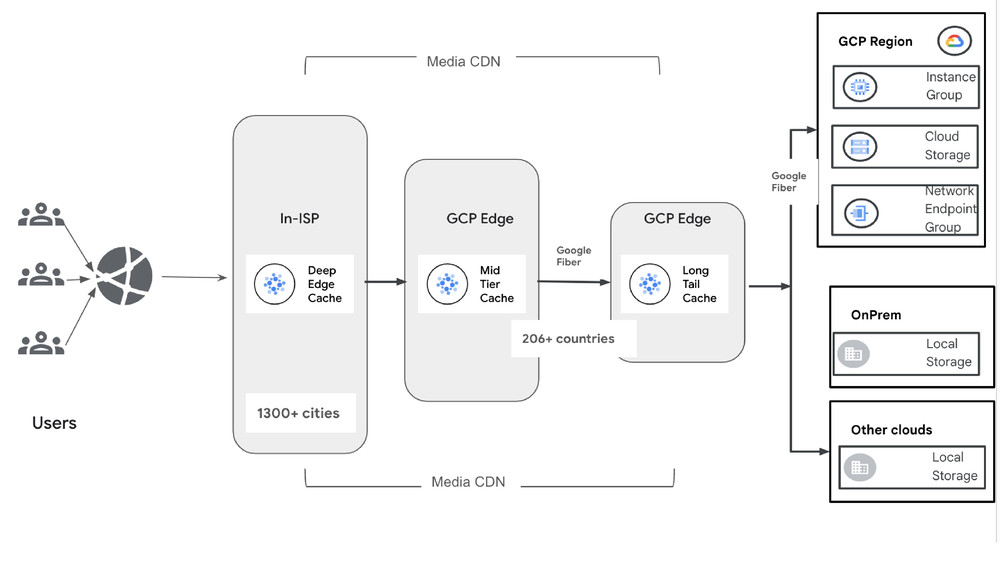

Horizontal scaling with batch prediction: Using the Vertex AI Batch Prediction API, incoming video is instantly directed to a distributed network of workers. This decouples model loading from inference, allowing the system to scale horizontally and process multiple athletes simultaneously without choking.

-

Volume and elasticity: Video analysis of U.S. Olympians is what we describe as ‘bursty’ – computational needs spike for the short duration of the athlete runs. . Vertex AI dynamically provisions resources to absorb these data spikes, rather than keeping resources always-on.

-

Security and exclusivity: To protect proprietary Team USA data, we established a Private Endpoint within a Virtual Private Cloud (VPC). Authorized traffic travels via dedicated network pathways, isolating the engine from the public internet to reduce the attack surface and minimize latency.

Beyond the snow

A system capable of reliable pose estimation under extreme winter conditions (high speeds, constant occlusion, and tight latency requirements) is a system that generalizes. We believe the underlying AI architecture, and its ability to derive generalized intelligence from structured data feeds, can enable a number of use cases beyond winter athletics.

Imagine a conversational AI physical therapy coach that analyzes a patient’s movement and helps correct their form. Or a robotic assistant for a factory worker, triggered by cues it notices in the worker’s posture. These are all potential use cases where specialized sensor AI, paired with powerful reasoning models, can provide helpful insights and actions.