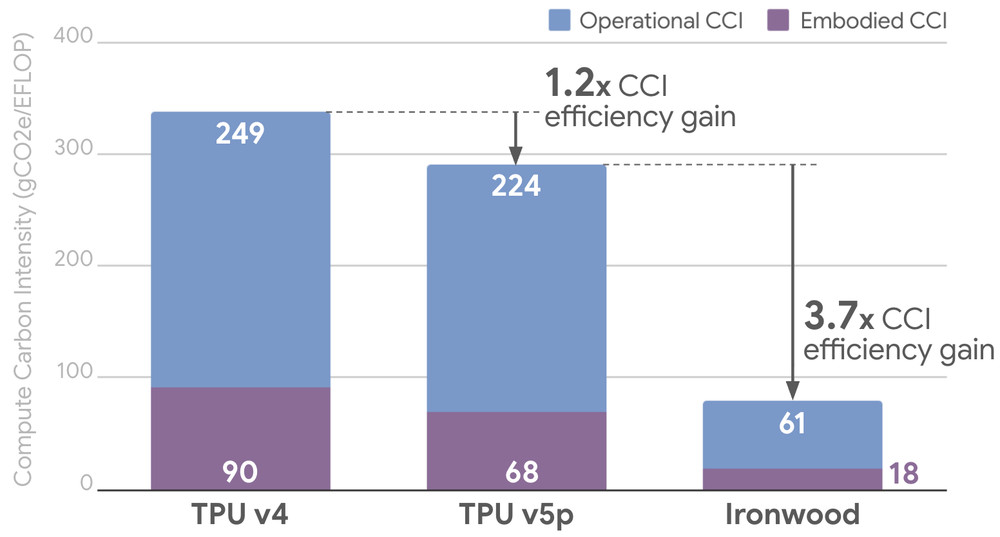

At Google, we are committed to being transparent about the environmental impact of our AI infrastructure, publishing metrics on the lifetime emissions of our chips, from manufacturing to powering them in the data center. Today, we are updating these metrics for our seventh-generation TPU, Ironwood, which demonstrates an approximately 3.7x improvement in Compute Carbon Intensity (CCI) compared to TPU v5p, the previous generation of performance-optimized TPUs.¹

In other words, even as AI drives demand for additional compute resources, our ongoing work to optimize AI hardware is helping to reduce the energy consumption and emissions per unit of compute for AI workloads.

Measuring AI accelerator efficiency: Compute Carbon Intensity (CCI)

To help manage the environmental impact of AI workloads, we monitor the Compute Carbon Intensity (CCI) of our AI accelerator hardware. CCI is defined in "An Introduction to Life-Cycle Emissions of Artificial Intelligence Hardware"² as the estimated amount of CO2 equivalent emitted for every utilized floating-point operation (CO2e/FLOP). This metric provides a holistic, chip-level view by including both the embodied emissions associated with manufacturing, transportation, and data center construction (Scope 3) and the operational emissions associated with running these chips in data centers (Scope 1 and 2).
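As a minimal sketch of the definition above, CCI can be computed as total lifetime emissions divided by utilized FLOPs. The class name, field names, and input values below are hypothetical and chosen only to make the arithmetic easy to follow; they are not Google's measurements or tooling.

```python
from dataclasses import dataclass

EXA = 1e18  # floating-point operations in one exaFLOP (EFLOP)


@dataclass
class LifetimeFootprint:
    """Hypothetical container for one chip's lifetime totals."""
    embodied_g: float     # Scope 3: manufacturing, transport, construction (gCO2e)
    operational_g: float  # Scope 1 and 2: running the chip in a data center (gCO2e)
    utilized_flop: float  # floating-point operations actually used by workloads


def cci_g_per_eflop(fp: LifetimeFootprint) -> float:
    """CCI = (embodied + operational emissions) / utilized FLOPs, in gCO2e/EFLOP."""
    total_g = fp.embodied_g + fp.operational_g
    return total_g / (fp.utilized_flop / EXA)


# Illustrative inputs only: 1 t embodied, 3 t operational, 20,000 EFLOP utilized.
example = LifetimeFootprint(embodied_g=1.0e6, operational_g=3.0e6, utilized_flop=2.0e22)
print(f"{cci_g_per_eflop(example):.0f} gCO2e/EFLOP")  # 200 gCO2e/EFLOP
```

Because utilized FLOPs sit in the denominator, the same chip reports a lower CCI when its workloads keep it busier.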

The Ironwood advantage: high performance, low footprint

Google’s TPU CCI continues to improve with each chip generation. Drawing from empirical data measured in January 2026, Ironwood demonstrates a remarkable 3.7x improvement in CCI relative to TPU v5p. This is a marked acceleration over the 1.2x CCI improvement of TPU v5p relative to TPU v4, and it demonstrates continued carbon efficiency optimization of Google’s performance-optimized TPU architecture.

These efficiency gains are driven by outsized compute performance increases between TPU generations relative to growth in machine energy consumption and manufacturing emissions. In fact, fleetwide measurements demonstrate a 5x improvement in utilized FLOPs across generations, from TPU v5p to Ironwood.³ Because the performance denominator in our CCI equation (CO2e/FLOP) is scaling faster than emissions, the net carbon cost per operation drops significantly with every new chip.

Figure 1: Ironwood’s accelerating CCI improvement measured on Google’s performance-optimized TPU cohort, considering January 2026 workloads.⁴
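The relationship between the 5x gain in utilized FLOPs and the 3.7x CCI improvement can be sketched with simple arithmetic. Since CCI is emissions divided by FLOPs, the two ratios together imply how much lifetime emissions grew. The source does not state this figure, so the result below is an inference that assumes both numbers are measured on the same basis. The function name is hypothetical.

```python
def implied_emissions_growth(flop_gain: float, cci_improvement: float) -> float:
    """Emissions growth implied by CCI = emissions / FLOPs.

    cci_improvement = CCI_old / CCI_new = flop_gain / emissions_growth,
    so emissions_growth = flop_gain / cci_improvement.
    """
    return flop_gain / cci_improvement


# Rounded figures quoted in the text: 5x utilized FLOPs, 3.7x CCI improvement.
growth = implied_emissions_growth(flop_gain=5.0, cci_improvement=3.7)
print(f"Implied lifetime emissions growth: {growth:.2f}x")  # 1.35x

# Peak BF16 figures from footnote 3 give a consistent FLOPs ratio.
peak_ratio = 2307 / 459
print(f"Peak BF16 ratio, Ironwood vs. TPU v5p: {peak_ratio:.2f}x")  # 5.03x
```

Under these assumptions, emissions per chip rise by roughly a third while utilized compute rises fivefold, which is why the carbon cost per operation falls.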

Operating Google’s TPU fleet more efficiently

Updated TPU CCI metrics also offer a direct comparison to the measurement we published in 2025. Specifically, from October 2024 to January 2026, Google’s versatile TPU cohort ran more efficiently than what we reported previously:

- TPU v5e achieved a 43% reduction in total CCI over 15 months, dropping to 228 gCO2e/EFLOP. This was driven by a 72% increase in average utilization.

- Trillium, the sixth-generation TPU, saw a 20% reduction in total CCI over the same time period, bringing its emissions intensity down to 125 gCO2e/EFLOP.

Figure 2: Google’s versatile TPU cohort demonstrates deployment efficiency gains for the same TPU generations between October 2024 and January 2026.⁵
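The two reductions above can be read backwards to estimate the October 2024 starting points. The following is a rough sketch that uses only the rounded percentages and January 2026 values quoted in the text, so the implied baselines are approximations and may differ from the figures actually published in 2025. The helper name is hypothetical.

```python
def implied_baseline(current_cci: float, reduction: float) -> float:
    """Back out the earlier CCI from the current value and a fractional reduction."""
    return current_cci / (1.0 - reduction)


# January 2026 values and reductions as quoted in the text (gCO2e/EFLOP).
v5e_now, v5e_reduction = 228.0, 0.43
trillium_now, trillium_reduction = 125.0, 0.20

v5e_before = implied_baseline(v5e_now, v5e_reduction)
trillium_before = implied_baseline(trillium_now, trillium_reduction)

print(f"TPU v5e implied October 2024 CCI:  ~{v5e_before:.0f} gCO2e/EFLOP")
print(f"Trillium implied October 2024 CCI: ~{trillium_before:.0f} gCO2e/EFLOP")

# Generational comparison in January 2026: TPU v5e CCI relative to Trillium.
print(f"TPU v5e / Trillium CCI ratio: {v5e_now / trillium_now:.2f}x")  # 1.82x
```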

Decoupling energy and emissions from performance

To what can we attribute these improvements? Beyond Ironwood’s raw hardware capabilities, these CCI gains are further enabled by deep software and system-level optimizations across our infrastructure:

- Software efficiency (MoE): The widespread adoption of sparse architectures, such as Mixture of Experts (MoE), routes computation only to necessary parameters. This drastically reduces the active FLOPs required per inference or training step without sacrificing model capacity or quality.

- Lower precision math (FP8): By heavily leveraging 8-bit floating-point (FP8) formats, we effectively double compute throughput and halve memory bandwidth requirements compared to 16-bit formats. This shows that we can maintain output quality while substantially decreasing the energy cost per mathematical operation.

- Workload mix and intelligent scheduling: Advanced fleet orchestration continuously balances the workload mix across our infrastructure. By intelligently scheduling tasks, we ensure high continuous utilization rates, optimize duty cycles, and minimize the carbon penalty of idle power draw.
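To illustrate why utilization matters so much for CCI, the toy model below spreads a fixed embodied footprint and a constant idle power draw over however many FLOPs the chip actually delivers. Every parameter value (peak throughput, lifetime, power, grid intensity, embodied emissions) is a hypothetical placeholder, and the linear power model is a simplifying assumption rather than Google's methodology.

```python
EXA = 1e18              # floating-point operations in one EFLOP
JOULES_PER_KWH = 3.6e6  # joules in one kilowatt-hour


def toy_cci_g_per_eflop(
    utilization: float,
    peak_flops: float = 1.0e15,               # hypothetical peak FLOP/s
    lifetime_s: float = 6 * 365 * 24 * 3600,  # hypothetical six-year deployment
    embodied_g: float = 1.0e6,                # hypothetical embodied gCO2e
    idle_w: float = 100.0,                    # hypothetical idle power (W)
    dynamic_w: float = 300.0,                 # hypothetical extra power at full load (W)
    grid_g_per_kwh: float = 100.0,            # hypothetical grid intensity (gCO2e/kWh)
) -> float:
    """Toy CCI: fixed costs (embodied, idle) amortized over utilized FLOPs."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    avg_power_w = idle_w + utilization * dynamic_w  # linear power model (assumption)
    energy_kwh = avg_power_w * lifetime_s / JOULES_PER_KWH
    operational_g = energy_kwh * grid_g_per_kwh
    utilized_eflop = utilization * peak_flops * lifetime_s / EXA
    return (embodied_g + operational_g) / utilized_eflop


# A 72% relative increase in utilization, for example 25% -> 43% of peak.
low, high = toy_cci_g_per_eflop(0.25), toy_cci_g_per_eflop(0.43)
print(f"Toy CCI falls by {100 * (1 - high / low):.0f}% as utilization rises")
```

In this model only the dynamic-power term scales with work done, so every increase in utilization dilutes the embodied and idle contributions per operation.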

Scale sustainably with Google Cloud

AI’s trajectory requires infrastructure that can scale exponentially without an equivalent surge in carbon emissions. The 3.7x carbon efficiency improvement from TPU v5p to Ironwood demonstrates that we can achieve greater compute density while minimizing the growth of our energy and environmental footprint through deliberate hardware and software codesign. To learn more and get started with Ironwood, register your interest with this form.

1. Following the methodology published in an August 2025 technical report, we quantified the full lifecycle emissions of TPU hardware as a point-in-time snapshot across Google’s generations of TPUs as of January 2026. The functional unit for this study is one AI computer deployed in the data center, which includes one or more accelerator trays (containing TPUs) connected to one host tray (i.e., a computing server). Peripheral components beyond the tray (e.g., rack, shelf, and network equipment) and auxiliary computing and storage resources are excluded from the calculation of embodied and operational emissions. We include the electricity used in data center cooling in operational emissions. To estimate operational emissions from electricity consumption of running workloads, we used a one-month sample of observed machine power data from our entire TPU fleet, applying Google’s 2024 average fleetwide carbon intensity. To estimate embodied emissions from manufacturing, transportation, and retirement, we performed a life-cycle assessment of the hardware. Data center construction emissions were estimated based on Google’s disclosed 2024 carbon footprint. These findings do not represent model-level emissions, nor are they a complete quantification of Google’s AI emissions. CCI results for specific workloads may vary based on the location of the TPUs that run them.

2. The authors would like to thank and acknowledge the co-authors of this paper for their important contributions to enable these results: Ian Schneider, Hui Xu, Stephan Benecke, Parthasarathy Ranganathan, and Cooper Elsworth.

3. This comparison considers the utilized FLOPS (BF16) between deployed TPU v5p and Ironwood chips in Google’s fleet in January 2026. This trend is consistent with the improvement in peak FLOPS (BF16) between v5p (459 TFLOPS) and Ironwood (2,307 TFLOPS).

4. The GHG Protocol offers two accounting standards for operational emissions. Results presented here consider market-based emissions, which include the impact of carbon-free energy purchases. Location-based accounting, which excludes carbon-free energy purchases, would raise operational CCI to 793, 712, and 195 gCO2e/EFLOP, respectively. The ratio of CCI improvements would be at a similar level, and Ironwood’s embodied CCI would drop from 23% to 8% of its total CCI.

5. To ensure a fair comparison across varying TPU utilizations, this analysis replicates the propensity score weighting methodology from the August 2025 technical report and compares January 2026 results to the results published in 2025. This statistical technique adjusts for duty cycle variations to balance the comparison of TPUs during a given time period. This empirical methodology results in small variations in calculated CCI between temporal periods, reflecting fluctuations in real-world energy consumption and hardware utilization across the global infrastructure.